The art of conversation: conventional HCI for wheelchair navigation

Human-Computer Interfaces (HCI) can have a major impact on how technology is used. The key idea is that control should be as intuitive as possible; otherwise, operating the machine can become a problem in itself.

Experience with new technology has shown that increased computerization does not guarantee better human-machine system performance. On the contrary, poor use of technology can produce systems that are difficult to learn or use, and may even lead to catastrophic errors. This may occur because, although physical workload is typically reduced in these cases, mental workload increases. When cutting-edge interfaces are used, the Digital Divide must also be taken into account: some people are simply not ready to deal with new, strange-looking gadgets.

Basically, this means that complexity should be handled by the computerized device rather than by the person driving it. Unfortunately, people with disabilities may face mild or even severe limitations when using physical devices, so suitable interfaces may range from obvious to fairly complex input systems.

Most people are used to operating vehicles with either steering wheels or handlebars, usually depending on whether the vehicle has two wheels or more. Experience shows that vehicles like quads, which present a handlebar interface but behave more like cars, can be tricky to drive at first, especially for bike riders. Both interfaces, however, are somewhat uncomfortable and too bulky for wheelchairs. Hence, the most common user interfaces in wheelchairs are joysticks, which simply let the user steer the vehicle in a chosen direction.

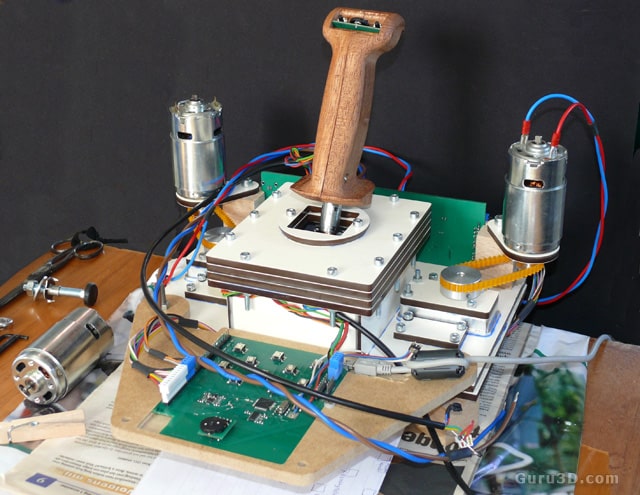
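As a rough illustration of how a joystick deflection can be turned into motion, the sketch below assumes a differential-drive wheelchair (two independently driven wheels) and a handful of preset speed levels. `SPEED_LEVELS`, `WHEEL_BASE`, `quantize_speed` and `joystick_to_wheels` are hypothetical names with illustrative values, not taken from any particular wheelchair controller.

```python
import math

# Hypothetical preset speed levels in m/s (illustrative values, not from any product).
SPEED_LEVELS = (0.0, 0.3, 0.6, 1.0)
# Hypothetical distance between the two drive wheels, in metres.
WHEEL_BASE = 0.55


def quantize_speed(magnitude: float) -> float:
    """Snap a stick deflection in [0, 1] to the nearest preset speed level."""
    magnitude = max(0.0, min(1.0, magnitude))
    return SPEED_LEVELS[round(magnitude * (len(SPEED_LEVELS) - 1))]


def joystick_to_wheels(x: float, y: float, max_turn_rate: float = 1.0):
    """Convert a stick deflection (x to the right, y forward, each in [-1, 1])
    into (left, right) wheel speeds in m/s for a differential-drive chair."""
    speed = quantize_speed(math.hypot(x, y))
    if speed == 0.0:
        return 0.0, 0.0  # dead zone: small deflections do not move the chair
    heading = math.atan2(x, y)  # 0 = straight ahead, positive = to the right
    scale = speed / SPEED_LEVELS[-1]
    linear = speed * math.cos(heading)  # forward (+) / backward (-) velocity
    angular = -max_turn_rate * scale * math.sin(heading)  # rad/s, + = turn left
    left = linear - angular * WHEEL_BASE / 2
    right = linear + angular * WHEEL_BASE / 2
    return left, right
```

Pushing the stick straight forward gives equal wheel speeds, while pushing it fully to the right spins the chair in place clockwise (left wheel forward, right wheel backward).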

Joysticks are cheap, compact and fairly intuitive, but they only provide direction information, and speed is usually limited to a few preset levels. To improve safety, force feedback joysticks have also been used [Urbano, 2005]. These joysticks include motors that offer resistance depending on how close obstacles are to the mobile platform, which makes it difficult to steer towards them. In a similar way, they can favor one direction over another when the onboard computer wants to suggest a particular path. The main advantage of these devices is that the HCI becomes bidirectional: the person can realize that his/her commands are not adequate and correct them on his/her own.
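The resistance behaviour described above can be sketched as a function that turns obstacle readings into a resistance level for the joystick motors. This is only an assumption about how such a mapping might look, not the scheme used in [Urbano, 2005]: the `Obstacle` type, the linear proximity ramp and the 1.5 m `safety_radius` are all hypothetical choices.

```python
import math
from dataclasses import dataclass


@dataclass
class Obstacle:
    bearing: float   # radians in the chair's frame: 0 = straight ahead, + = to the right
    distance: float  # metres from the wheelchair


def feedback_resistance(command_heading: float, obstacles, safety_radius: float = 1.5) -> float:
    """Return a resistance level in [0, 1] for a force-feedback joystick.

    Resistance grows as an obstacle gets closer and as the commanded
    direction points more directly at it. Obstacles beyond safety_radius,
    or not in the direction of travel, produce no resistance.
    """
    resistance = 0.0
    for obs in obstacles:
        if obs.distance >= safety_radius:
            continue
        # 1 = heading straight at the obstacle, <= 0 = moving away or sideways.
        alignment = math.cos(obs.bearing - command_heading)
        if alignment <= 0.0:
            continue
        proximity = 1.0 - max(obs.distance, 0.0) / safety_radius
        # Keep the strongest contribution so a single close obstacle dominates.
        resistance = max(resistance, alignment * proximity)
    return resistance
```

A motor controller would then apply a torque proportional to this value, so the stick stiffens as the user pushes towards a nearby obstacle and stays free when steering away from it.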

Some experiments try to achieve this effect with colored lights or sounds [Cortes et al., 2007], but both techniques are more intrusive than haptic joysticks. In some cases, though, users may be unable to use a joystick, due to either cognitive or physical disabilities. This requires a different approach to navigation control.

If users cannot physically operate a joystick at all, some systems rely on touch screens that display a map of the environment. In this case, a goal can be set simply by touching the desired location on the map [Argyros, 2002]. From that point on, autonomous navigation techniques borrowed from classic mobile robotics can take over to compute and follow a path to the goal.
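Setting a goal by touch and handing it over to a planner could look roughly like the sketch below. It assumes the map is an occupancy grid drawn full-screen and uses A* as one classic planner from mobile robotics; [Argyros, 2002] may well rely on a different technique, and `touch_to_cell` and `astar` are hypothetical names.

```python
import heapq


def touch_to_cell(px, py, screen_w, screen_h, rows, cols):
    """Map a touch at pixel (px, py) on a full-screen map to a grid cell (row, col)."""
    col = min(cols - 1, int(px * cols / screen_w))
    row = min(rows - 1, int(py * rows / screen_h))
    return row, col


def astar(grid, start, goal):
    """A* over a 4-connected occupancy grid (0 = free, 1 = occupied).
    Returns the list of cells from start to goal, or None if unreachable."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for 4-connected unit-cost moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk back through the parents
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None
```

The resulting cell sequence would then be passed to a path-following controller; the user's only input is the initial touch.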

Touch screens come in different types: integrated into computers, like UltraMobile or Tablet PCs, or external (e.g. Powerbox), either connected to a conventional PC, like CINTIQ tablets, or built into a different device that can connect to the PC via WiFi, like a Nintendo DS. Costs range from about a hundred to several thousand EUR. These interfaces are fairly intuitive and easy to use, but their main drawback is that, once a destination is set, users have little to do but be transported to the goal. At best, depending on their capabilities, they can be asked to draw a preferred path on the screen. If the user feels like it, though, he/she can manoeuvre the wheelchair with simple commands like "go ahead", "turn left", "turn right", etc. And why not? Everything is better with an iPhone xD.
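The simple manoeuvring commands could, for example, be mapped to velocity set-points as sketched below. The vocabulary, the velocity values and the choice to stop on any unrecognized command are illustrative assumptions, not part of any particular system.

```python
# Hypothetical command vocabulary: (linear velocity in m/s, angular velocity in rad/s).
# Positive angular velocity means turning left (counter-clockwise).
COMMANDS = {
    "go ahead": (0.5, 0.0),
    "turn left": (0.0, 0.5),
    "turn right": (0.0, -0.5),
    "stop": (0.0, 0.0),
}
STOP = COMMANDS["stop"]


def parse_command(text: str):
    """Normalize case and spacing, then look the command up.
    Anything unrecognized falls back to STOP as a fail-safe choice."""
    key = " ".join(text.lower().split())
    return COMMANDS.get(key, STOP)
```

Stopping on unknown input trades convenience for safety: a misrecognized command halts the chair rather than moving it unexpectedly.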
